The Hierarchical Guide to Improving Paid Ads Performance

Improving paid ads performance requires moving beyond random A/B testing and adopting a strict hierarchical approach. By prioritizing high-impact variables like product selection and offer structure before tweaking creative visuals, marketers can systematically compound their results.


Many marketers suffer from "death by testing," treating every variable in an ad account as equally important. However, genuine performance improvements rarely come from testing button colors or minor copy tweaks in isolation. To significantly improve paid ads performance, campaigns should follow a structured decision tree where foundational elements are validated before cosmetic variables are introduced.

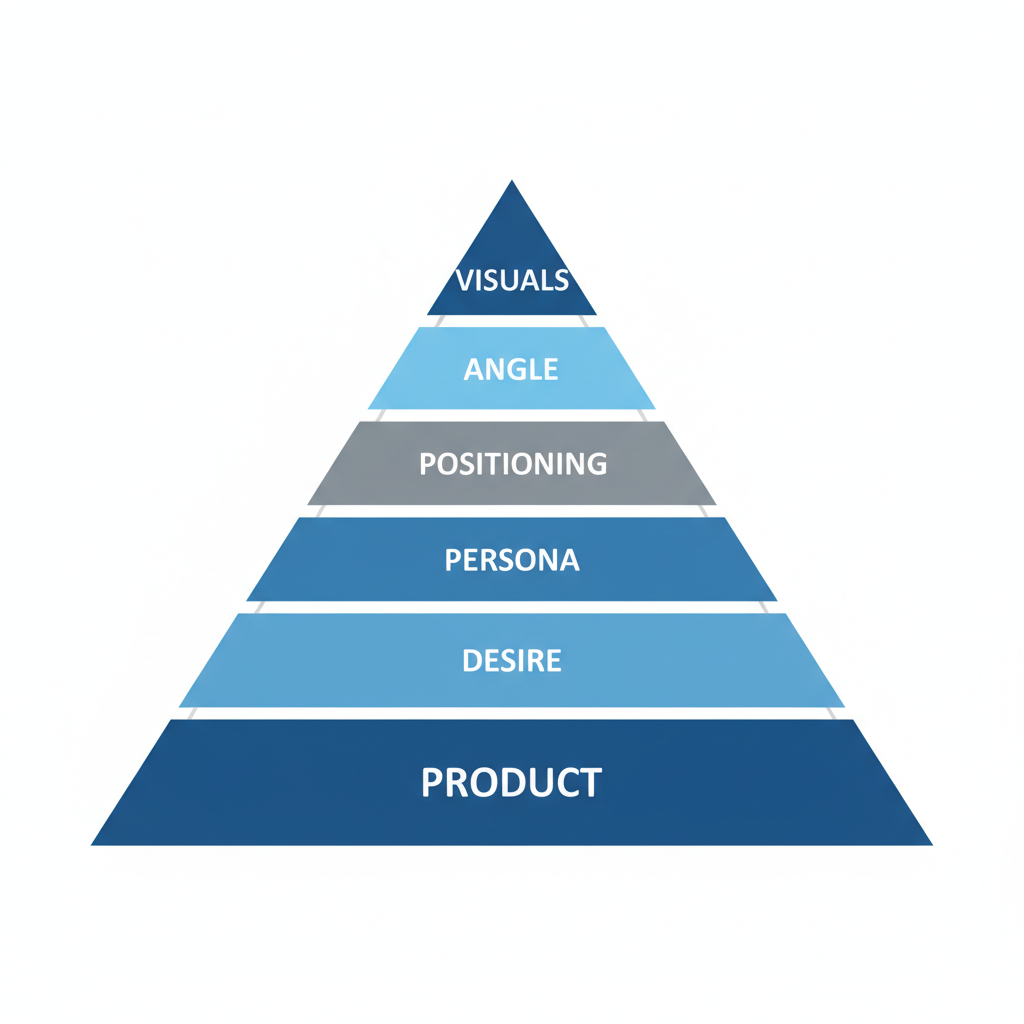

The Hierarchy of Ad Performance

Successful advertising accounts operate on a hierarchy of impact. Testing must proceed from the most fundamental business levers to the most granular creative executions. The logical progression for testing is: Product, Offer, Desire, Persona, Positioning, Angle, and finally, Visuals.

When performance stagnates, the solution is rarely to produce more video variations of a failing concept. Instead, marketers must move up the chain to verify if the product or offer is the actual bottleneck.
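The top-down diagnosis can be sketched as a simple walk over the hierarchy: check each layer in the order given above and stop at the first one that has not been validated. This is a minimal illustration, assuming a hypothetical `validated` mapping that a team would fill in from its own test results; the function name and data shape are illustrative, not part of any ad platform.

```python
from typing import Mapping, Optional

# Testing order from the hierarchy above, most fundamental layer first.
HIERARCHY = (
    "Product",
    "Offer",
    "Desire",
    "Persona",
    "Positioning",
    "Angle",
    "Visuals",
)


def first_unvalidated_layer(validated: Mapping[str, bool]) -> Optional[str]:
    """Return the highest layer that has not yet been validated.

    `validated` is a hypothetical record of test outcomes, e.g.
    {"Product": True, "Offer": False}. Layers missing from the mapping
    are treated as unvalidated. Returns None when every layer has passed.
    """
    for layer in HIERARCHY:
        if not validated.get(layer, False):
            return layer
    return None


# Example: the team wants new videos, but the offer has not been proven yet.
status = {"Product": True, "Offer": False, "Visuals": False}
print(first_unvalidated_layer(status))  # → Offer
```

Because the walk stops at the first failing layer, an unproven Offer is flagged before Visuals is ever considered, which mirrors the advice to move up the chain instead of producing more video variations.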

1. Product Selection: Hero vs. Laggards

The foundation of any high-performing ad account is the product itself. A common error is allocating test budget to slow-moving inventory ("shelf warmers") in an attempt to clear stock. This rarely works for cold acquisition, where the audience has no prior relationship with the brand.

Focus on Hero Products: Budget should be consolidated behind products with validated demand. These "Hero Products" act as the primary door openers. Secondary products should only be tested once the Hero Product's volume is maximized or if CRM data proves a specific item has a superior Customer Lifetime Value (CLV) or acts as a better entry point for new-to-brand customers.
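One way to check whether a secondary product is a better entry point is to group customers by the product in their first order and compare how much revenue each cohort generates over time. The sketch below assumes a hypothetical CRM export with one record per order line (customer ID, order date, SKU, revenue) and uses total historical revenue per customer as a simple stand-in for CLV; it is not a predictive CLV model, and the SKU names and figures are made up.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, List, NamedTuple


class Order(NamedTuple):
    customer_id: str
    order_date: date
    sku: str
    revenue: float


def revenue_per_customer_by_entry_sku(orders: List[Order]) -> Dict[str, float]:
    """Average total revenue per customer, grouped by the SKU of their first order."""
    first_sku: Dict[str, str] = {}
    total_revenue: Dict[str, float] = defaultdict(float)
    # Process orders chronologically so each customer's first SKU is recorded first.
    for order in sorted(orders, key=lambda o: o.order_date):
        first_sku.setdefault(order.customer_id, order.sku)
        total_revenue[order.customer_id] += order.revenue

    # Sum each customer's total revenue under their entry SKU.
    cohort_revenue: Dict[str, float] = defaultdict(float)
    cohort_size: Dict[str, int] = defaultdict(int)
    for customer, sku in first_sku.items():
        cohort_revenue[sku] += total_revenue[customer]
        cohort_size[sku] += 1

    return {sku: cohort_revenue[sku] / cohort_size[sku] for sku in cohort_revenue}


# Hypothetical order history (illustrative numbers only).
orders = [
    Order("c1", date(2024, 1, 5), "HERO", 40.0),
    Order("c1", date(2024, 3, 1), "REFILL", 25.0),
    Order("c2", date(2024, 1, 9), "HERO", 40.0),
    Order("c3", date(2024, 2, 2), "STARTER", 30.0),
    Order("c3", date(2024, 2, 20), "HERO", 40.0),
    Order("c3", date(2024, 4, 1), "REFILL", 25.0),
]
print(revenue_per_customer_by_entry_sku(orders))  # → {'HERO': 52.5, 'STARTER': 95.0}
```

With these made-up numbers, the STARTER cohort looks like the stronger entry point despite a lower first-order value. In practice the cohorts need enough customers and a comparable observation window before such a comparison is meaningful.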

2. Offer Engineering

Once the product is selected, the offer determines conversion efficiency. In a landscape of rising CPMs and economic tightening, offers must be "Cold Friendly"—designed to trigger impulse purchases from users who do not know the brand.

Effective offers generally fall into three categories:

- Maximum Savings: Bundles or volume discounts that rationalize the purchase through value.

- Maximum Results: Complementary products sold together to ensure the customer achieves the desired outcome faster.

- Risk Reversal: Offers focused on anti-disappointment, such as guarantees or trial periods that lower the barrier to entry.
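Step 2 of the workflow later in this guide judges offers on both Average Order Value (AOV) and conversion rate, and the two can point in different directions. A common way to combine them is revenue per visitor: conversion rate multiplied by AOV, which simplifies to revenue divided by visitors. The sketch below assumes hypothetical results for one offer from each category; the class, function, and numbers are illustrative, not benchmarks.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class OfferResult:
    name: str
    visitors: int  # assumed > 0
    orders: int    # assumed > 0
    revenue: float

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def aov(self) -> float:
        # Average Order Value: revenue per completed order.
        return self.revenue / self.orders

    @property
    def revenue_per_visitor(self) -> float:
        # conversion_rate * aov simplifies to revenue / visitors.
        return self.revenue / self.visitors


def rank_offers(results: List[OfferResult]) -> List[OfferResult]:
    """Sort offers from highest to lowest revenue per visitor."""
    return sorted(results, key=lambda r: r.revenue_per_visitor, reverse=True)


# Hypothetical results from one test round (illustrative numbers only).
results = [
    OfferResult("Maximum Savings (bundle)", visitors=4000, orders=120, revenue=9000.0),
    OfferResult("Maximum Results (kit)", visitors=4000, orders=90, revenue=8550.0),
    OfferResult("Risk Reversal (guarantee)", visitors=4000, orders=140, revenue=7000.0),
]
for r in rank_offers(results):
    print(f"{r.name}: CR {r.conversion_rate:.2%}, AOV {r.aov:.2f}, RPV {r.revenue_per_visitor:.2f}")
```

In this made-up example the guarantee offer converts best but ranks last, because its lower AOV outweighs the extra orders. Discount-heavy offers such as bundles should also be checked on margin, since revenue per visitor ignores the cost of goods.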

3. Desire and Market Sentiment

Even a great product with a strong offer can fail if it misaligns with the current market "vibe" or macro-sentiment. This layer of testing involves matching the narrative to the current consumer mindset.

For example, during periods of high inflation, a "luxury status" angle may underperform compared to a "smart investment" or "long-term value" angle. Seasonality also dictates desire; a fitness product sold in January targets the "New Year, New Me" desire, while the same product in June targets "Summer Body" readiness. Aligning with the overarching market wave allows ads to ride existing momentum rather than fighting against it.

4. Persona and Positioning

Diversifying ad accounts often requires selling the same product to different people for different reasons. This is the "Persona" layer. A health supplement might be sold to one persona for athletic performance, to another for gut health, and to a third as a gift for a partner.

Modern ad platforms use AI to segment audiences, but they need distinct creative inputs to reach distinct segments. By feeding the algorithm different positioning angles, such as a medical authority angle versus a lifestyle convenience angle, marketers can unlock entirely new pools of inventory within the same platform.

5. Visual Execution and Creative Iteration

Only after the Product, Offer, Desire, Persona, Positioning, and Angle are validated should the focus shift to visual testing. This is the final step, not the first.

Once a winning script or concept is identified, it can be iterated into dozens of formats to combat ad fatigue. A single winning angle can be produced as:

- UGC (User Generated Content) testimonials

- Founder-led explainers

- AI voiceover demonstrations

- Static image ads

- Carousel slides

This allows for horizontal scaling of a proven concept without reinventing the messaging wheel every week.
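Producing one proven angle in many formats is easier to evaluate when every variant is named consistently. The sketch below generates one ad name per format and hook variant; the `persona__angle__format__hN` scheme and the function name are hypothetical conventions, not a platform requirement. Keeping the hierarchy layers in the name lets results be grouped by layer during the weekly review.

```python
from itertools import product
from typing import List

# Formats listed in the section above, as short identifiers.
FORMATS = [
    "ugc_testimonial",
    "founder_explainer",
    "ai_voiceover_demo",
    "static_image",
    "carousel",
]


def build_variant_names(persona: str, angle: str, formats: List[str], hooks: int = 1) -> List[str]:
    """Return one ad name per (format, hook) pair for a single proven angle.

    The naming scheme is a hypothetical convention: persona__angle__format__hN.
    """
    return [
        f"{persona}__{angle}__{fmt}__h{hook}"
        for fmt, hook in product(formats, range(1, hooks + 1))
    ]


names = build_variant_names("gift_giver", "long_term_value", FORMATS, hooks=2)
print(len(names))  # → 10
print(names[0])    # → gift_giver__long_term_value__ugc_testimonial__h1
```

Since only the format and hook fields vary, grouping results by the third field of the name shows which format carries the angle best without mixing in changes to the message itself.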

Practical Workflow for Systematic Testing

To implement this hierarchy and improve paid ads performance, follow this step-by-step workflow:

- Step 1: Audit the Hero Product. Identify the SKU with the highest organic demand and best unit economics; pause testing on low-velocity items.

- Step 2: Develop Three "Cold Friendly" Offers. Create variations based on savings, results, and risk reversal to see which yields the highest Average Order Value (AOV) and conversion rate.

- Step 3: Align with Macro-Sentiment. Review current market trends (seasonality, economy) and adjust the primary "Desire" hook to match.

- Step 4: Diversify Personas. Draft scripts that address at least two distinct user archetypes (e.g., the end-user vs. the gift-giver).

- Step 5: Iterate Visuals. Take the winning message and produce it in 5-10 different visual formats (video, static, lo-fi, hi-fi).

- Step 6: Review Weekly. Analyze which layer of the hierarchy is bottlenecking performance and dedicate the next sprint to fixing that specific layer.

Common Mistakes in Ad Testing

Avoid these pitfalls to ensure consistent performance improvements:

- Testing in the Wrong Order: Obsessing over video hooks while the offer is weak or the product is irrelevant to cold traffic.

- Death by Testing: Running too many insignificant tests simultaneously, preventing any single variable from reaching statistical significance.

- Ignoring CRM Data: Promoting products that have high initial conversion but poor retention or upsell potential.

- Founder Bias: Launching new products based on internal assumptions rather than post-purchase survey data from existing customers.

- Single-Format Reliance: Failing to adapt a winning script into different visual formats (e.g., only running video and neglecting static images).
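The "Death by Testing" problem can be made concrete with the standard sample-size approximation for comparing two conversion rates. The sketch below uses the normal-approximation formula for a two-sided, two-proportion test at 5% significance and 80% power; the baseline conversion rate, the lift to detect, and the daily visitor count are hypothetical inputs, and it assumes traffic is split evenly across variants, which ad platforms rarely do. Treat the output as a rough planning figure.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_cr: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant to detect a relative lift in conversion rate.

    Normal-approximation formula for a two-sided, two-proportion test.
    """
    p1 = baseline_cr
    p2 = baseline_cr * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


def days_to_result(daily_visitors: int, variants: int, n_per_variant: int) -> int:
    """Days until every variant reaches n_per_variant, assuming an even traffic split."""
    per_variant_daily = daily_visitors / variants
    return math.ceil(n_per_variant / per_variant_daily)


# Hypothetical inputs: 2% baseline conversion rate, detecting a 20% relative lift.
n = sample_size_per_variant(baseline_cr=0.02, relative_lift=0.20)
for variants in (2, 5, 10):
    days = days_to_result(daily_visitors=2000, variants=variants, n_per_variant=n)
    print(variants, "variants:", days, "days")
```

The required sample per variant stays the same, but the calendar time grows roughly in proportion to the number of variants sharing the traffic, which is why a few focused tests tend to produce answers sooner than many small ones.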

By adhering to a strict testing hierarchy, marketers can diagnose issues faster and scale budgets with confidence, knowing that the foundation of the campaign is solid.

For teams looking to refine their testing roadmap, platforms like AdLibrary.com provide access to a vast database of active ads, allowing marketers to research how competitors structure their offers, angles, and creative formats across different industries.
